The Shopify Agentic Plan: How to Get Your Product Data Ready and the Ways Shopify Machine Learning Transforms It Beyond Your Control

Shopify’s Agentic Plan pushes your product data to its Catalog API, where agentic surfaces ingest it. Any brand, on any ecommerce platform, can now submit its products to Shopify’s agentic commerce infrastructure and get discovered by AI agents through the Catalog MCP.

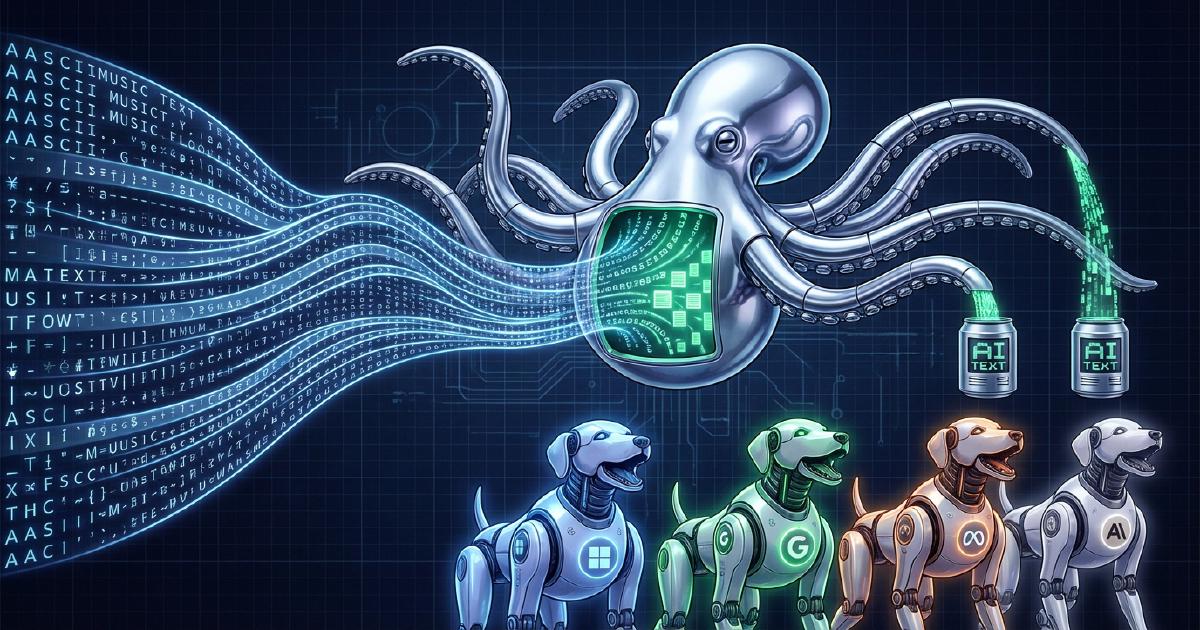

What actually determines how those agents understand and recommend your products is a machine learning pipeline that transforms your product data before it ever reaches an agent. Most brands don’t know this is happening.

This article covers how the transformation works, what you can control going in, and what Shopify’s ML decides on your behalf.

The single most important input to that ML pipeline is your product description. Shopify reads it and generates seven fields that AI agents use when matching your product to buyer queries. The quality of those fields determines whether your product shows up in agent search results at all. The description field supports up to 512 KB, which is roughly 67,000 words, more than The Great Gatsby. Most product descriptions are a few hundred words. That gap is the opportunity. Here is what gets built from your description:

Description

These fields power the Catalog API Search step. Agents rank your product on them before ever reaching your storefront.

How Your Product Data Gets Changed by Shopify Machine Learning for the Catalog API

When an agentic surface receives a buyer query, the first thing it uses for semantic matching is not your storefront copy. It is a set of fields that Shopify’s ML generates from your product description. Agents score and rank your product on these fields during the Catalog API Search step, so whether your product surfaces at all depends on the quality of these seven fields:

Here is what that transformation looks like with Glossier’s Full Orbit eye cream. We pulled the data directly from the Catalog API endpoints and here’s what we found:

Glossier’s description is rich and detailed. That gave the ML enough signal to produce coherent output. The risk is with products where the source material is thinner:

- New product with an undeveloped description. The ML has almost nothing to work from. The inferred fields will be sparse, generic, or wrong. Agents will rank other products ahead of yours.

- Reformulated product with outdated copy. The description still reflects the old formula. The ML generates inferred fields from that stale copy and agents surface the wrong version of your product to buyers.

- Description written as marketing language with few specifics. Phrases like “luxurious formula” and “transformative results” give the ML no extractable features, specs, or use cases. The topFeatures and techSpecs fields come back thin or empty.

- No way to review or correct before agents use it. There is no merchant-facing preview of inferred fields. By the time you notice an agent surfacing your product with a bad uniqueSellingPoint or missing topFeatures, buyers have already seen it.

Every one of these fields draws on your product description. A thin description produces thin inferred fields. The GraphQL API for Agentic Plan data ingestion supports descriptions up to 512 KB and has been confirmed to accept and store data at that size. How much of that text the Catalog API reads when generating inferred fields is still unknown. Use as much of the allowance as you can, and write for both the customer and the model.
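To see how much of that allowance a description actually uses, a small check like the one below can help. It is a minimal sketch that assumes the limit is measured in UTF-8 bytes and that 1 KB means 1,024 bytes; neither detail is confirmed in this article, and the function name `description_budget` is ours. For reference, fitting 67,000 words into 512 KB implies an average of about 7.8 bytes per word, spaces included.

```python
# Assumption: the 512 KB limit counts UTF-8 bytes, with 1 KB = 1,024 bytes.
LIMIT_BYTES = 512 * 1024


def description_budget(description: str, limit_bytes: int = LIMIT_BYTES) -> dict:
    """Report how much of the byte allowance a description uses."""
    # Byte length can exceed character length for non-ASCII text.
    used = len(description.encode("utf-8"))
    return {
        "words": len(description.split()),
        "bytes_used": used,
        "bytes_remaining": limit_bytes - used,
        "percent_used": round(100 * used / limit_bytes, 2),
    }


sample = "Brightening eye cream with caffeine and niacinamide. 15 ml tube."
report = description_budget(sample)
print(report)
```

A typical few-hundred-word description will report well under one percent used, which is the gap described above.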

Here’s what you control. Here’s what you don’t.

Most of what AI agents receive from the Catalog API is your product data as-is: title, pricing, images, variants, availability, and checkout URL. That is a direct mirror of your Shopify admin.

The seven inferred fields are the exception. They are generated by Shopify’s ML from your product description and other inputs, and they power the initial search matching step. When an agent receives a query like “brightening eye cream for dark circles,” the Catalog MCP runs a Search call that returns products ranked on these inferred fields, not on your raw product description. The Lookup step retrieves full product detail for matched results.
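The two-step shape described above can be illustrated with a toy, in-memory model. This is not Shopify’s API or its ranking method: the catalog, the `search` and `lookup` functions, and the word-overlap scoring are all hypothetical stand-ins, chosen only to show that ranking can happen on inferred fields while the full record is fetched afterwards.

```python
# Toy catalog. Field names follow this article; all values are made up.
CATALOG = {
    "p1": {
        "title": "Example Eye Cream",
        "description": "Storefront copy, not used for ranking in this toy.",
        "inferred": {"topFeatures": ["brightening", "targets dark circles"]},
    },
    "p2": {
        "title": "Example Hand Cream",
        "description": "Storefront copy, not used for ranking in this toy.",
        "inferred": {"topFeatures": ["moisturizing", "fast absorbing"]},
    },
}


def search(query: str) -> list[str]:
    """Rank product ids by word overlap between the query and inferred fields only."""
    query_words = set(query.lower().split())
    scores = {}
    for pid, product in CATALOG.items():
        features = " ".join(product["inferred"]["topFeatures"])
        scores[pid] = len(query_words & set(features.lower().split()))
    ranked = sorted(scores, key=scores.get, reverse=True)
    return [pid for pid in ranked if scores[pid] > 0]


def lookup(pid: str) -> dict:
    """Return the full product record for a matched id."""
    return CATALOG[pid]


matches = search("brightening eye cream for dark circles")
details = [lookup(pid) for pid in matches]
print(matches, [d["title"] for d in details])
```

In this toy, a product with empty or generic inferred features never reaches the lookup step, no matter how good its storefront copy is.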

The clearest official confirmation that these fields come from Shopify’s inference rather than from merchant input came from the developer forum thread “UCP readiness questions” (January through February 2026):

February 3, 2026 — Liam-Shopify (Shopify Staff)

"Properties like uniqueSellingPoint, topFeatures, techSpecs are coming from the catalog, not from the merchant's mapping, or metafields."

Follow-up question — Developer

"How would merchants improve their products attributes for better discovery?"

No reply from Shopify. Here’s the full thread for reference: community.shopify.dev/t/ucp-readiness-questions/28684

The thread also reveals that dev store products are not currently included in the global catalog, which limits merchants’ ability to test inference on new products before they go live.

The actual merchant control surface has three tiers.

Tier 1 (full control): Product-level inputs feed the inference pipeline; Knowledge Base content affects brand and policy responses without going through inference at all.

- Product title, description, images, options, pricing, availability

- Taxonomy category and category metafields

- Combined Listings configuration

- Knowledge Base App content

Tier 2 (partial control): Catalog Mapping lets merchants redirect input sources to metafields or metaobjects. This affects what data enters the ML inference pipeline, not what the pipeline produces.

- Product title source

- Product description source

- Product category source

Tier 3 (no control): Generated by Shopify’s specialized LLMs and served directly to AI agents with no merchant preview, approval, or override.

- uniqueSellingPoint

- topFeatures

- techSpecs

- attributes

The most practical starting point:

- Assign the most specific taxonomy category available

- Populate all category-specific metafields with structured values

- Write descriptions that state features and specifications explicitly in parseable formats

- Populate the Knowledge Base App with accurate FAQs and brand voice content
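As a rough way to check descriptions against the third item in that list, the sketch below flags vague marketing phrases and counts explicit number-plus-unit mentions. The phrase list, the unit list, and the function name `audit_description` are illustrative assumptions, not anything Shopify publishes, and passing this check says nothing certain about what the inference pipeline will extract.

```python
import re

# Hypothetical list of vague phrases; extend it for your own catalog.
VAGUE_PHRASES = ("luxurious formula", "transformative results")

# Matches a number followed by a common unit, such as "15 ml" or "5%".
SPEC_PATTERN = re.compile(
    r"\b\d+(?:\.\d+)?\s?(?:(?:ml|g|kg|oz|mm|cm)\b|%)", re.IGNORECASE
)


def audit_description(text: str) -> dict:
    """Flag vague phrases and collect explicit spec mentions in a description."""
    lowered = text.lower()
    return {
        "vague_phrases": [p for p in VAGUE_PHRASES if p in lowered],
        "spec_mentions": SPEC_PATTERN.findall(text),
    }


thin = audit_description("A luxurious formula with transformative results.")
rich = audit_description("Contains 5% niacinamide in a 15 ml tube.")
print(thin)
print(rich)
```

A description that returns several vague phrases and no spec mentions is a candidate for a rewrite before it is submitted.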

The gap in merchant control over inferred fields is real. The unanswered community question makes that plain, and closing it is the biggest open problem in Shopify’s agentic commerce tooling right now.

Need a strategy for writing product descriptions that improve visibility in AI surfaces?

WISLR works with brands to audit catalog readiness for agentic commerce, including product description structure, inferred field quality, and Catalog API positioning.

Frequently Asked Questions

Can merchants edit or override inferred fields in Shopify’s Catalog API?

No. There is no direct mechanism.

- uniqueSellingPoint, topFeatures, techSpecs, attributes, and description are all ML-generated

- Shopify staff confirmed in February 2026 that these come from the catalog inference pipeline, not merchant mappings or metafields

- The unanswered follow-up question in the community thread (“how would merchants improve their products attributes for better discovery?”) reflects the current state of documentation on this topic

Is there any way to see what Shopify has inferred for my products?

Not through a standard merchant tool.

- Developers with Catalog API access can query the Search and Lookup endpoints and inspect inferred field values directly

- The Catalog Mapping preview shows input data, not inferred output

- SimGym tests agent behavior end-to-end but does not surface raw inferred field values

- The Storefront MCP does not include the uniqueSellingPoint, topFeatures, or techSpecs fields from the global Catalog MCP
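For developers who do have Catalog API access, a check like the following can flag products whose inferred fields came back empty. It is a sketch under assumptions: the field names are the ones cited in this article, but the flat dictionary shape of `example` is hypothetical and the real response structure may differ, so adapt the key access to whatever the endpoints actually return.

```python
# Field names taken from this article; the payload shape is an assumption.
INFERRED_FIELDS = ("uniqueSellingPoint", "topFeatures", "techSpecs", "attributes")


def missing_inferred_fields(product: dict) -> list[str]:
    """Return inferred fields that are absent or empty in one product record."""
    return [name for name in INFERRED_FIELDS if not product.get(name)]


# Hypothetical record, standing in for one product from a Lookup response.
example = {
    "title": "Example Eye Cream",
    "uniqueSellingPoint": "Brightens dark circles",
    "topFeatures": [],
    "techSpecs": None,
}
missing = missing_inferred_fields(example)
print(missing)
```

Running this across a catalog export gives a shortlist of products whose descriptions are worth strengthening first.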